On February 28, 2026, the United States and Israel launched a large-scale joint airstrike on Iranian nuclear facilities and military infrastructure under the codename “Operation Epic Fury.” The attack came just two days after nuclear negotiations in Geneva collapsed on February 26. What drew even greater attention was the speed and precision of the operation. Explosions occurred almost simultaneously across Iran, including in Tehran, Isfahan, and Karaj. Coordinating an operation of this scale in such a short time would have been nearly impossible through human judgment alone. For this operation, U.S. Central Command (CENTCOM) reportedly used Palantir’s Maven Smart System to process massive volumes of data collected from satellites, drones, reconnaissance aircraft, and electronic signals, with Anthropic’s Claude integrated into the system to support target identification and battlefield analysis. It would not be an exaggeration to call modern warfare an “AI war.” Artificial intelligence is no longer simply a tool that makes work easier; it can now influence decisions that ultimately affect human lives.

This development has also brought a new debate to the surface: how far should AI be used in warfare? In this article, we examine how AI is actually being used in the defense industry today and explore the tensions this has created between technology companies and governments.

Now There Is No War Without AI

The recent U.S.–Iran conflict marks a symbolic turning point. Real-time data analysis and algorithm-based decision support made target selection, threat evaluation, and air-operation coordination possible at a level that was previously difficult to imagine.

Since the war in Ukraine began, many countries have promoted the concept of “software-first defense” almost as an official slogan. Traditional defense contractors alone have struggled to meet the growing demand for data and AI capabilities, and software- and sensor-focused defense technology companies such as Palantir and Anduril are filling that gap, gradually reshaping the defense industry.

Today, AI in the defense industry is no longer an optional feature added to a few individual weapons systems. It has become a foundational layer that runs through the entire course of warfare, from planning and execution to post-operation evaluation. The U.S.–Iran conflict is simply one of the latest and most extreme examples of this structure already operating on real battlefields.

Five Major Areas Where AI Is Used in the Defense Industry

1. Intelligence, Surveillance, and Reconnaissance (ISR) and Command and Control (C2) – A Digital Battlefield Map Created with AI

In modern warfare, one of the first areas where AI is considered indispensable is ISR and command and control. Satellites, reconnaissance aircraft, drones, radar systems, and communications equipment generate enormous volumes of data, far more than human analysts can process on their own.

AI collects and refines data from multiple sensors and automatically correlates reports that refer to the same location and time window, creating a digital battlefield map that shows what is happening, where, and what activity is taking place. Object recognition, change detection, and pattern analysis models flag unusual signals first; human analysts then verify the results and make the final judgment.
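The matching step described above can be illustrated with a deliberately simplified sketch. It assumes each sensor report carries a position on a flat local grid (in km) and a timestamp, and it greedily groups reports that fall within a hypothetical distance and time window. The `Report` type, the `associate` and `corroboration` functions, and the thresholds are illustrative assumptions, not how Gotham, Maven, or any fielded system works; real fusion engines rely on probabilistic tracking rather than fixed cutoffs.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Report:
    """A single sensor observation (hypothetical schema for illustration)."""
    sensor: str   # e.g. "satellite", "drone", "radar"
    x_km: float   # position on an assumed flat local grid, in kilometers
    y_km: float
    t_s: float    # timestamp, in seconds


def associate(reports, max_dist_km=1.0, max_dt_s=60.0):
    """Greedily group reports that likely describe the same event.

    Reports are processed in time order; each joins the first cluster whose
    seed (earliest) report is within max_dist_km and max_dt_s, otherwise it
    starts a new cluster.
    """
    clusters = []
    for r in sorted(reports, key=lambda rep: rep.t_s):
        for cluster in clusters:
            seed = cluster[0]
            close_in_space = hypot(r.x_km - seed.x_km, r.y_km - seed.y_km) <= max_dist_km
            close_in_time = abs(r.t_s - seed.t_s) <= max_dt_s
            if close_in_space and close_in_time:
                cluster.append(r)
                break
        else:
            clusters.append([r])
    return clusters


def corroboration(cluster):
    """Number of distinct sensors that observed the same event."""
    return len({r.sensor for r in cluster})
```

For example, a satellite report at (0, 0) and a drone report 0.5 km away 20 seconds later end up in one cluster corroborated by two sensors, while a radar report about 14 km away forms its own cluster. In the workflow the article describes, each cluster would still go to a human analyst for verification.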

A representative company in this field is Palantir. Palantir’s Gotham and Foundry platforms integrate a wide range of military and intelligence data to create a unified operational picture, and they are known to operate in conjunction with systems related to the U.S. Department of Defense’s Project Maven. More recently, the company introduced AIP (AI Platform), which allows users to query battlefield data in natural language and combine it with simulations to generate new insights, effectively functioning as an AI staff assistant.

As a result, commanders no longer need to monitor individual sensor screens one by one. Instead, they can view major risks, potential targets, and changes over time on a single integrated situation display. Many analysts believe that similar AI-based ISR and C2 systems played a central role in the recent U.S.–Iran conflict.

2. Autonomous and Unmanned Platforms – A Battlefield Where Drones and Robots Operate

In the past, unmanned systems involved far more human participation than their name suggested. Operators remotely controlled the platforms and humans monitored screens to make decisions. Today, however, that model is rapidly changing. AI is increasingly taking responsibility for route planning, obstacle avoidance, target detection, and even coordinated swarm flight.
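Route planning with obstacle avoidance is, at its core, a shortest-path search. The sketch below uses textbook A* on a 2D occupancy grid (1 marks an obstacle) with a Manhattan-distance heuristic. The grid model, unit move costs, and the `astar` function are simplifying assumptions for illustration; real unmanned platforms plan in continuous 3D space with terrain, vehicle dynamics, and moving obstacles.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid where 1 marks an obstacle.

    Returns the list of (row, col) cells from start to goal, or None if
    the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance never overestimates the cost of unit-cost 4-way moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None
```

For a grid whose middle row is a wall with a single gap at the right edge, the returned path detours through that gap; if no route exists at all, the function returns `None`.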

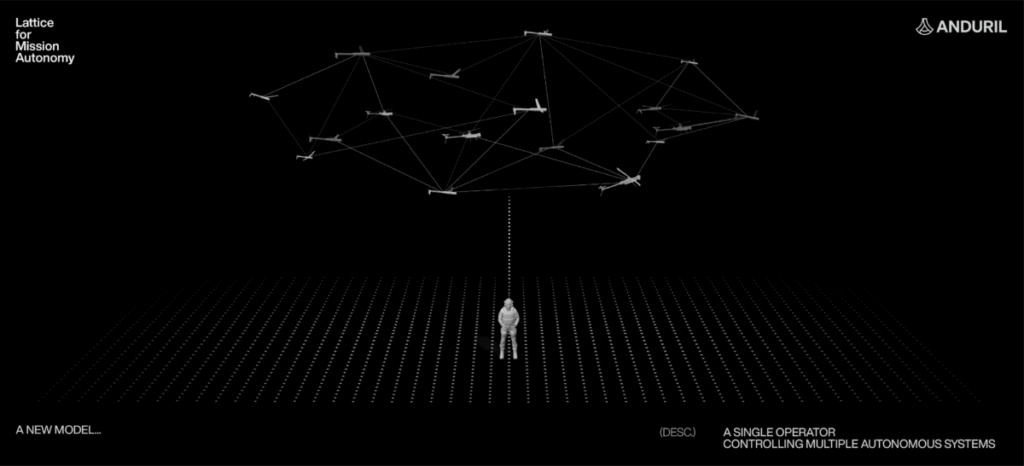

One company that clearly represents this trend is Anduril Industries in the United States. Through a software platform called Lattice OS, Anduril connects reconnaissance drones, interceptor drones, and maritime and ground unmanned platforms into a single network. Sensor data collected by each platform is shared in real time, and in autonomous mode AI assists with mission planning and certain engagement decisions.

Shield AI takes a slightly different approach. Its Hivemind system is AI pilot software that allows drones to keep flying and searching target areas even when GPS signals are unavailable or communications are disrupted. It was designed for real battlefield conditions, where electronic interference is common.

South Korea is moving in the same direction. Companies such as Hyundai Rotem are developing next-generation unmanned combat vehicles and autonomous armored vehicles with AI-based navigation and target tracking. The military is also experimenting with robots for frontline reconnaissance and mine detection. AI-equipped platforms are gradually taking over some of the most dangerous missions that once required soldiers to be physically present.

3. Strike and Defense – Missile Defense and Loitering Munitions

Imagine several missiles approaching simultaneously, and the need for AI becomes clear. When dozens of threats appear at once, human operators struggle to analyze them and prioritize interception decisions quickly enough. AI analyzes radar and sensor data in real time, identifies incoming threats, predicts their trajectories, and recommends which interception systems should be used and in what order. Human operators then review and approve these recommendations.

Loitering munitions are another form of AI application. These weapons circle above a target area and strike at the optimal moment, combining reconnaissance and attack in a single platform. A representative example is AeroVironment’s Switchblade. Once operators deploy the system over a target area, AI identifies and proposes potential targets, and the operator gives final authorization for any strike.

Israel’s IAI has taken this concept further with systems such as Harpy and Harop. These platforms can detect specific radar signals and autonomously track and attack their source, which is why they are often described as munitions that search for targets. Many countries still maintain the principle that lethal strikes require final human approval. However, from a technological perspective, the barrier to fully autonomous attacks is already very low, which is one reason ethical debates surrounding AI in the defense industry continue.

4. Logistics, Training, and Simulation – AI Changing the Back End of War

Although these areas may not appear as dramatic as weapons systems on the battlefield, logistics and training ultimately determine how long a military can sustain operations. AI is quietly improving efficiency in these areas.

In logistics and maintenance, predictive maintenance based on sensor data attached to equipment is a representative example. AI analyzes operational data to predict failures before they occur. Demand forecasting models are also used to estimate when and how many parts will be needed and to identify risks within supply chains in advance. Preventing a tank from stopping in the middle of a conflict due to a missing component may seem simple, but it is actually a critical issue for military operations.
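Both ideas can be sketched with simple statistics. The block below assumes a hypothetical stream of equipment sensor readings (for example, engine temperature) and flags any reading that deviates from its recent trailing average by more than a chosen number of standard deviations; it then produces a one-step-ahead parts-demand forecast with simple exponential smoothing. The function names, window size, threshold, and smoothing factor are illustrative assumptions; production predictive-maintenance systems typically rely on learned failure models rather than a fixed z-score rule.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, z_threshold=3.0):
    """Return indices of readings that deviate sharply from the trailing window.

    A reading is flagged when it lies more than z_threshold sample standard
    deviations from the mean of the previous `window` readings. A window with
    zero spread is skipped to avoid dividing by zero.
    """
    flagged = []
    for i in range(window, len(readings)):
        past = readings[i - window:i]
        mu, sigma = mean(past), stdev(past)
        if sigma > 0 and abs(readings[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


def forecast_demand(history, alpha=0.3):
    """Simple exponential smoothing: forecast next period's demand.

    alpha in (0, 1] controls how strongly recent periods outweigh older ones.
    """
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level
```

With readings `[70.0, 70.5, 69.8, 70.2, 70.1, 70.3, 85.0, 70.2]`, only the spike at index 6 is flagged. The spike then inflates the window used for the next few readings, which is one reason real systems prefer more robust statistics.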

In training and simulation, AI increasingly plays the role of a virtual enemy. In the past, simulated opponents usually followed fixed patterns designed by humans. Today, AI agents trained with reinforcement learning adapt their behavior and act less predictably, much like real adversaries. These AI opponents are increasingly integrated into training simulators for fighter pilots and ground commanders, allowing some expensive live-fire or live-maneuver exercises to be partially replaced by virtual training. Being able to run more scenarios, more often and at lower cost, is fundamentally changing the quality of military training.
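The difference between an opponent that follows a fixed, human-designed pattern and one that learns can be shown with the simplest form of reinforcement learning. The toy example below uses tabular Q-learning on a hypothetical one-dimensional corridor: the agent is never told which way to move, receives a reward only on reaching the far end, and, through epsilon-greedy exploration, occasionally tries random moves. Everything here (environment, reward, hyperparameters, function names) is an illustrative assumption; training simulators use far richer environments and deep RL methods.

```python
import random


def train_q_agent(n_states=6, episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning on a 1-D corridor of n_states cells.

    Actions: 0 = move left (the left wall blocks movement), 1 = move right.
    Reaching the rightmost cell yields reward 1 and ends the episode.
    Returns the learned Q-table as a list of [Q(left), Q(right)] per state.
    """
    rng = random.Random(seed)  # seeded so training is reproducible
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        s = 0
        while s != goal:
            # Epsilon-greedy: explore at random, or break ties randomly;
            # otherwise take the action with the higher estimated value.
            if rng.random() < epsilon or q[s][0] == q[s][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[s][0] > q[s][1] else 1
            s_next = max(0, s - 1) if a == 0 else s + 1
            reward = 1.0 if s_next == goal else 0.0
            # Terminal transitions have no future value to bootstrap from.
            target = reward if s_next == goal else reward + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
    return q


def greedy_policy(q):
    """Best-known action per state (ties resolve to 1, moving right)."""
    return [0 if values[0] > values[1] else 1 for values in q]
```

After training with a fixed seed, the greedy policy moves right from every non-terminal state. That behavior comes from the learned values rather than a hand-written rule, and the exploration rate keeps the agent's moves from being perfectly predictable while it trains.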

5. Border Security, Public Safety, and Domestic Security

The use of AI in the defense industry is not limited to the battlefield. AI-based defense and security technologies are also being deployed in border control, demilitarized zones, maritime boundaries, ports, airports, and urban security environments.

Computer vision systems analyze CCTV and drone footage in real time to detect intrusions or unusual behavior. Autonomous patrol robots combine thermal imaging, lidar sensors, and AI to repeatedly monitor large areas and immediately notify human operators if anomalies are detected. In South Korea, intelligent surveillance cameras and autonomous monitoring robots are already being tested near the military demarcation line, and the use of dual-use security robots is gradually expanding.
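The most basic form of the change detection such systems build on is frame differencing. The sketch below assumes two grayscale frames from a fixed camera, given as 2D lists of 0-255 intensities, and raises an alert for a human operator only when enough pixels change at once, which suppresses isolated sensor noise. The thresholds and function names are hypothetical; deployed systems layer learned object detectors and tracking on top of steps like this.

```python
def changed_pixels(prev_frame, curr_frame, threshold=30):
    """Return (row, col) positions whose intensity changed by more than threshold.

    Frames are equally sized 2D lists of grayscale values (0-255).
    """
    return [
        (r, c)
        for r, (row_prev, row_curr) in enumerate(zip(prev_frame, curr_frame))
        for c, (p, q) in enumerate(zip(row_prev, row_curr))
        if abs(q - p) > threshold
    ]


def should_alert(prev_frame, curr_frame, threshold=30, min_pixels=3):
    """Notify a human operator only if at least min_pixels pixels changed.

    Requiring several changed pixels filters out single-pixel noise.
    """
    return len(changed_pixels(prev_frame, curr_frame, threshold)) >= min_pixels
```

For example, a 2×2 bright patch appearing in an otherwise static 4×4 frame changes four pixels and triggers an alert, while a single noisy pixel does not.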

However, this area also raises uncomfortable questions. If AI systems constantly monitor citizens even during peacetime, where should the line between national security and privacy be drawn? As the technology advances rapidly, society will increasingly need to address this question as well.

Global Key Players and Real-World Products

Which companies are actually active across the five areas discussed above? Below is a brief overview of representative companies and technologies by country.

United States

- Palantir

: Known for one of the most prominent ISR and command-and-control data platforms. Through systems such as Gotham and AIP, the company provides infrastructure that integrates and analyzes battlefield data for the U.S. Department of Defense and allied militaries.

- Anduril

: A company that demonstrates a different approach from traditional defense contractors by connecting drones and unmanned platforms through a software platform called Lattice OS.

- AeroVironment

: A specialized manufacturer of small drones and strike systems. Its Switchblade loitering munition gained real-world operational experience during the war in Ukraine.

Israel and Turkey

- IAI

: Often considered a pioneer of autonomous and semi-autonomous loitering munitions such as Harpy and Harop. These systems demonstrated their effectiveness during the Azerbaijan–Armenia conflict.

- Baykar

: A Turkish company that reshaped the global mid- to low-cost drone market with systems such as TB2 and Akıncı. Its drones have been exported to more than 40 countries and gained global recognition during the war in Ukraine.

China and Russia

- DJI (China)

: The world’s leading civilian drone manufacturer. According to reports from NPR and Army Technology, both Russia and Ukraine have widely used commercial models such as the Mavic as reconnaissance and tactical drones capable of dropping small munitions. In the United States, the Department of Commerce placed DJI on the Entity List in 2020 over national security and human rights concerns. According to FCC documentation, DJI was later added to the FCC Covered List in late 2025, effectively preventing new products from entering the U.S. market.

- Russia

: Russia is often considered to lag behind Western countries in advanced drone technology. However, according to CNN reports, Russia has acquired Shahed drone technology from Iran and expanded domestic production while also sourcing dual-use components from China. This reflects a strategy of compensating for technological gaps through alliances and supply networks.

South Korea

- Hanwha Aerospace

: According to Asiae reports, the company is introducing AI-based target identification and fire control functions into air defense and guided missile systems. Its laser anti-air weapon “Block-I” has attracted attention as an asymmetric capability because each shot costs only around 2,000 KRW in electricity, far less than a conventional missile interception.

- Hyundai Rotem

: The company is working on projects that apply autonomous driving and AI-based target tracking technologies to next-generation tanks and unmanned combat vehicles. Through an MOU with Shield AI, Hyundai Rotem aims to develop networks in which drones share reconnaissance information in real time with tanks and self-propelled artillery.

- Korea Aerospace Industries (KAI)

: The company is pursuing the development of manned–unmanned teaming systems linked to the KF-21 fighter. It plans to gradually implement the “loyal wingman” concept, in which unmanned aircraft such as ALE or AAP are controlled by the KF-21 during operations.

What these companies have in common is that AI is no longer confined to research laboratories. It has already become part of real products delivered to militaries and deployed in operational environments.

The Current State of AI Seen Through the U.S.–Iran Conflict

Returning to the U.S.–Iran conflict discussed at the beginning of this article, the case vividly shows how AI can dramatically improve efficiency in warfare. On the first day of the airstrikes, the U.S. military reportedly struck more than 1,000 targets, a pace that reflects how quickly AI-assisted systems can move from target identification to strike coordination. In earlier conflicts, the process from reconnaissance to bombing could take months; AI has compressed much of that timeline to near real time.

First, AI maximized efficiency during the operational preparation stage.

Palantir’s Maven Smart System and Gotham reportedly integrated satellite, drone, and signals intelligence data to automatically identify Iranian nuclear facilities, IRGC command centers, and air defense systems. Anthropic’s Claude reportedly analyzed this data to evaluate the strategic value of each target and propose an optimal prioritization. Work that previously would have taken human analysts days could now be completed within hours. Because the system structured and visualized massive amounts of information in real time, commanders could make decisions quickly using intuitive digital maps.

Second, during the execution phase, AI coordinated multiple platforms simultaneously.

Stealth aircraft such as the B-2, drones, and cruise missiles were reportedly linked through a network that allowed them to penetrate air defenses without collisions or operational conflicts. Mission planning software, reportedly supported by Claude, optimized flight paths and enabled real-time information sharing, making synchronized strikes possible. AI managed the complexity of hundreds of weapons systems operating at once, allowing U.S. forces to destroy key targets before Iran could organize a meaningful response.

Third, in the simulation phase, AI reportedly evaluated tens of thousands of possible retaliation scenarios and recommended the most effective strike sequence.

According to these reports, Claude analyzed the movement patterns of Iranian leadership and military infrastructure to calculate potential outcomes, allowing the U.S. military to minimize risk while maximizing strategic impact. At every stage of the operation, AI accelerated decision-making.

Ultimately, the U.S.–Iran conflict demonstrates that AI has already become core infrastructure on the battlefield, increasing the speed and precision of military operations to levels that were previously unimaginable.

We Will Not Be Divided – Red Lines for Military AI

At roughly the same time as the U.S.–Iran conflict, another front emerged within Silicon Valley around the issue of military AI. Employees from companies such as Google and OpenAI published an open letter titled “We Will Not Be Divided,” calling for their technologies not to be used for large-scale surveillance or fully autonomous lethal weapons.

The letter included two main demands.

- Opposition to large-scale, continuous surveillance using AI, especially when directed at a country’s own citizens.

- Opposition to the development and deployment of fully autonomous lethal weapons capable of making life-and-death decisions without human intervention.

Anthropic had already formalized similar principles. During negotiations with the U.S. Department of Defense, the company set two red lines: Claude would not be used for surveillance of U.S. citizens, and it would not support the operation of fully autonomous lethal weapons. Despite these positions, Claude was reportedly deeply involved in target selection and operational simulations during the strikes on Iran. Although the Trump administration had ordered a halt to the system’s use, the lack of viable alternatives reportedly led to its continued use. This situation illustrates that even when companies try to set ethical boundaries, those boundaries may carry little weight if governments choose not to recognize them.

This issue is also difficult for the defense industry to ignore. If military operations become heavily dependent on a particular AI system, the sudden withdrawal of cooperation by a technology company could disrupt operations themselves. When these events are viewed together, several critical questions emerge.

- Up to what point is this simply a matter of technology and procurement?

- At what point should ethical refusal from developers and companies be recognized?

- What kind of legal and institutional framework should governments build around these questions?

These are not concerns limited to the United States. Any country preparing for AI-driven defense capabilities will eventually face similar challenges.

The Future of War Ultimately Remains a Human Choice

In conclusion, the recent conflict between the United States and Iran is powerful evidence that AI has already become central to modern warfare. From target selection and operational planning to post-operation evaluation, there are now very few stages where AI is not involved. At the same time, the conflict leaves us with an uncomfortable question: how far should we allow this automation to go?

The use of AI in the defense industry will almost certainly continue to accelerate. More advanced weapons, more efficient logistics systems, and more precise defense technologies will continue to emerge. However, the decision of whether to press the button, whether that decision should be handed over to AI, and who should ultimately take responsibility for those choices still belongs to humans. War is not a beta test. It is a reality in which hundreds, thousands, or even tens of thousands of lives may be at stake.

How far should we allow AI to be used in the defense industry? And which countries and companies, guided by what principles, can truly provide safer and more trustworthy security? These questions will remain challenges that humanity must continue to confront.

Sources

- https://americanbazaaronline.com/2026/02/28/ai-war-in-iran-and-the-sovereignty-struggle-over-autonomous-technology-476067/

- https://www.mk.co.kr/en/world/11976016

- https://theconversation.com/the-pentagon-strongarmed-ai-firms-before-iran-strikes-in-dark-news-for-the-future-of-ethical-ai-2771

- https://www.businessinsider.com/openai-google-employee-petition-military-ai-use-anthropic-pentagon-defense-2026-2

- https://www.change.org/p/ai-for-us-not-against-us-we-the-people-will-not-be-divided

- https://www.tekedia.com/openai-and-google-employees-unite-in-petition-against-unrestricted-military-use-of-ai-citing-mass-survei

- https://lodi411.com/lodi-eye/anduril-and-palantir-ai-enabled-transformation-of-us-defense

- https://nstxl.org/defense-tech-trends/

- https://www.twobirds.com/en/insights/2026/ai-and-other-technological-advancements-in-defence-,-a-,-security

- https://eu.36kr.com/en/p/3707157440557190

- https://www.armadainternational.com/2024/09/weapons-that-watch-and-wait/

- https://en.wikipedia.org/wiki/AeroVironment_Switchblade

- https://efile.fara.gov/docs/6491-Informational-Materials-20220319-644.pdf

- https://asianews.network/south-korea-accelerates-ai-push-in-next-generation-weapons-programs/

- https://www.koreaherald.com/article/10602299

- https://www.koreatimes.co.kr/southkorea/defense/20260202/lawmakers-of-rival-parties-propose-bill-to-promote-ai-driven-research-i

- https://en.yna.co.kr/view/AEN20251024004500320

- https://pulse.mk.co.kr/news/english/11447093

- https://www.uasvision.com/2020/12/21/dji-added-to-us-to-department-of-commerce-blacklist/

- https://www.army-technology.com/news/ukraine-buys-another-4200-dji-mavic-drones/

- https://www.npr.org/2023/03/21/1164977056/a-chinese-drone-for-hobbyists-plays-a-crucial-role-in-the-russia-ukraine-war

- https://www.bloomberg.com/news/newsletters/2026-03-05/iran-war-provides-a-large-scale-test-for-ai-assisted-warfare